Hello,

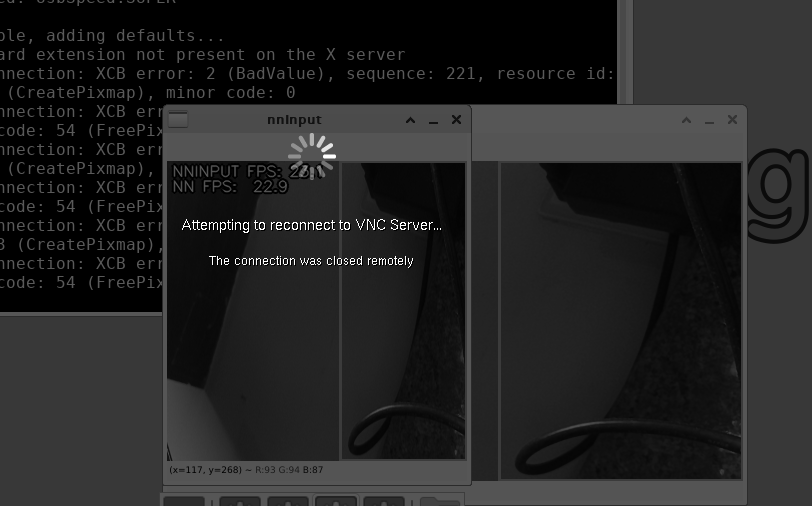

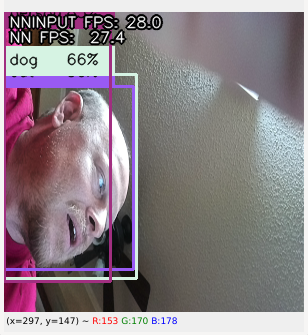

You can see I am having trouble with the tidl-tools:

debian@BeagleBone:~/TENsor$ ./dataOne.py

/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops/__init__.py:98: UserWarning: unable to load libtensorflow_io_plugins.so: unable to open file : libtensorflow_io_plugins.so, from paths: ['/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops/libtensorflow_io_plugins.so']

caused by: ["[Errno 2] The file to load file system plugin from does not exist.: '/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops/libtensorflow_io_plugins.so'"]

warnings.warn(f"unable to load libtensorflow_io_plugins.so: {e}")

/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops/__init__.py:104: UserWarning: file system plugins are not loaded: unable to open file: libtensorflow_io.so, from paths: ['/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops/libtensorflow_io.so']

caused by: ['/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops/libtensorflow_io.so: cannot open shared object file: No such file or directory']

warnings.warn(f"file system plugins are not loaded: {e}")

TensorFlow version: 2.10.0-rc2

2022-10-10 22:06:03.415256: W tensorflow/core/framework/cpu_allocator_impl.cc:82] Allocation of 188160000 exceeds 10% of free system memory.

Epoch 1/5

1875/1875 [==============================] - 19s 7ms/step - loss: 0.2975 - accuracy: 0.9133

Epoch 2/5

1875/1875 [==============================] - 13s 7ms/step - loss: 0.1434 - accuracy: 0.9574

Epoch 3/5

1875/1875 [==============================] - 13s 7ms/step - loss: 0.1065 - accuracy: 0.9675

Epoch 4/5

1875/1875 [==============================] - 13s 7ms/step - loss: 0.0879 - accuracy: 0.9729

Epoch 5/5

1875/1875 [==============================] - 13s 7ms/step - loss: 0.0763 - accuracy: 0.9761

313/313 - 3s - loss: 0.0744 - accuracy: 0.9779 - 3s/epoch - 9ms/step

That is from this source:

#!/usr/bin/python3

# from some file on tensorflow's website...

import tensorflow as tf

print("TensorFlow version:", tf.__version__)

mnist = tf.keras.datasets.mnist

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train, x_test = x_train / 255.0, x_test / 255.0

model = tf.keras.models.Sequential([

tf.keras.layers.Flatten(input_shape=(28, 28)),

tf.keras.layers.Dense(128, activation='relu'),

tf.keras.layers.Dropout(0.2),

tf.keras.layers.Dense(10)

])

predictions = model(x_train[:1]).numpy()

predictions

tf.nn.softmax(predictions).numpy()

loss_fn = tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True)

loss_fn(y_train[:1], predictions).numpy()

model.compile(optimizer='adam',

loss=loss_fn,

metrics=['accuracy'])

model.fit(x_train, y_train, epochs=5)

model.evaluate(x_test, y_test, verbose=2)

probability_model = tf.keras.Sequential([

model,

tf.keras.layers.Softmax()

])

probability_model(x_test[:5])

So, the .so file is missing but it still runs somehow?
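Both messages above are reported as `UserWarning`, not as exceptions, so Python keeps going after printing them. To double-check which of the two files really are absent, something like the rough sketch below could be used. The directory and file names are copied from the warnings; the helper name `find_missing` is made up for illustration and is not part of TensorFlow or tensorflow_io.

```python
from pathlib import Path

# Directory and file names copied from the two warnings in the output above.
OPS_DIR = Path(
    "/home/debian/.local/lib/python3.9/site-packages/tensorflow_io/python/ops"
)
PLUGIN_NAMES = ["libtensorflow_io_plugins.so", "libtensorflow_io.so"]


def find_missing(ops_dir, names):
    """Return the entries of `names` that are not regular files in `ops_dir`.

    Hypothetical helper for illustration only.
    """
    ops_dir = Path(ops_dir)
    return [name for name in names if not (ops_dir / name).is_file()]


if __name__ == "__main__":
    # Print one line per shared object that cannot be found on disk.
    for name in find_missing(OPS_DIR, PLUGIN_NAMES):
        print("missing:", name)
```

If both names get printed, the warnings are accurate about the files not being there, and the training run simply did not depend on them.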

Seth

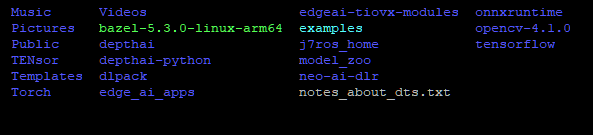

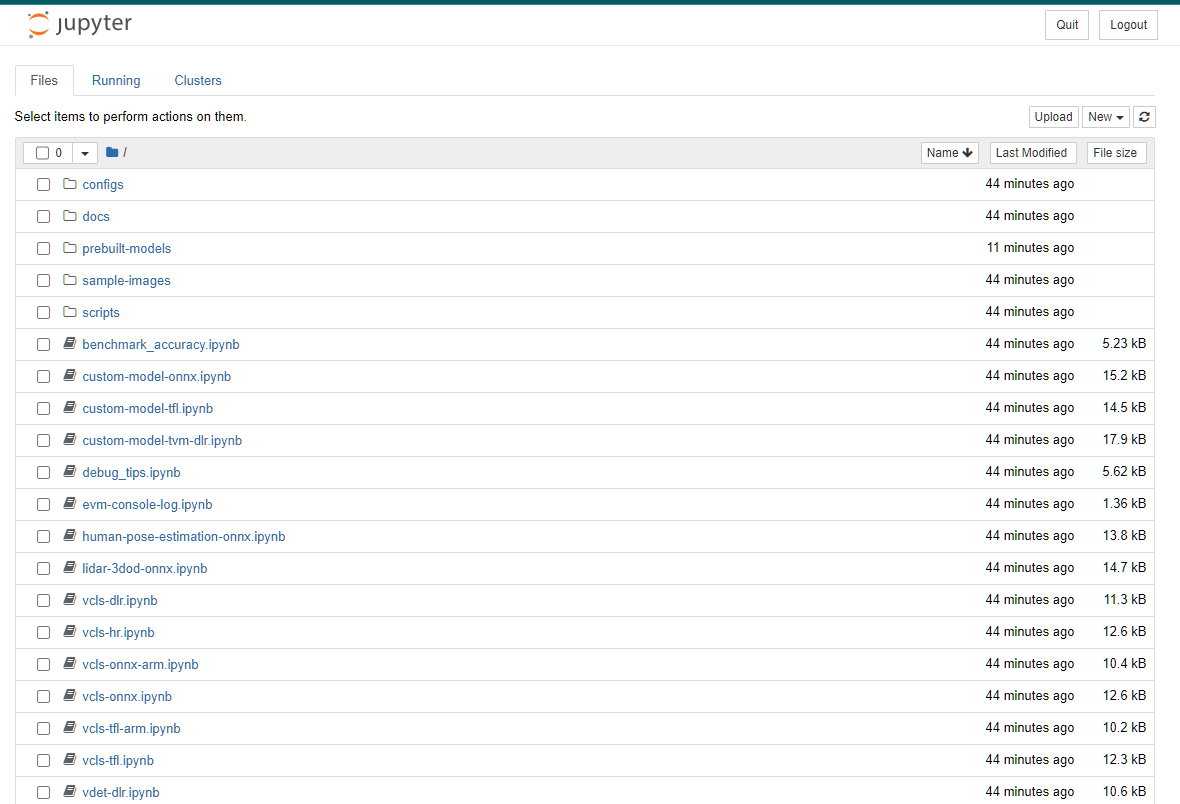

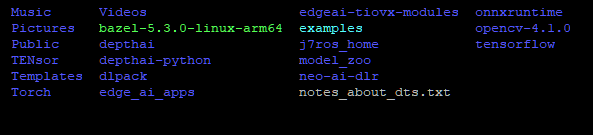

P.S. Please view this photo to see what files are listed in my /home/debian/ directory.

I am not sure if it is easy to see, but there are many libraries on the machine so far that will not build correctly. There…it should be a bit easier to read now.

See edge_ai_apps and onnxruntime. Both builds stop somewhere between about 65% and 100%, depending on how long they have been compiling.

It should be as easy as make sdk for the vision apps, but it is not when building from scratch.